Research project: Make like a bat

How echoes can be used by blind people for spatial awareness and navigation.

For most people, vision is the most important sense for spatial awareness (knowing what is in the environment) and navigation (moving safely through the environment). For blind people, however, their ears are their eyes: sound and hearing are critical for their spatial awareness, navigation and independence.

Inspired by the high-profile cases of blind people who echolocate (for example, see here), we are interested in how blind, and perhaps even sighted, people can use echoes for detecting, localizing and discriminating objects. We are also interested in how hearing loss affects this ability and how assistive devices can be used to maximize blind people's access to, and use of, sounds that can help them to be independent.

Sample lecture on human echolocation

YouTube lecture 'How our ears can help us see'

For technical information visit the Human Echolocation website

Daniel's interest in human echolocation research was sparked by his involvement with a large, multi-centre project called the BIAS Project (see here). BIAS stands for Bio-Inspired Acoustic Systems. The project brought together scientists, engineers and clinicians from around the UK with the aim of learning from animals that use sounds to make a living in order to solve human problems, such as sensing and controlling teams of robots, detecting underwater mines and diagnosing diseases.

The principal investigator of the BIAS Project was the University of Southampton's Professor Bob Allen. His specific research was to learn more about echolocation from bats and dolphins in order to develop improved forms of SONAR. Having heard that echolocation is used by blind people, Bob thought that there was something to learn from humans too. Bumping into each other one day on the stairs of ISVR, Bob and Daniel got chatting about the problem and decided to work together to find out more about human echolocation.

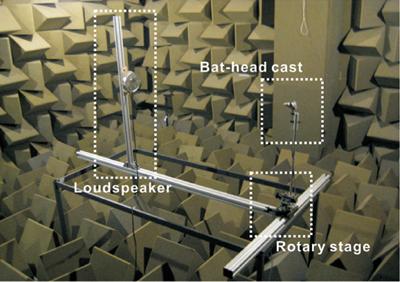

Bob, Daniel and PhD student Suyeon Kim (now Dr Suyeon Kim) investigated the acoustic signals arriving at the eardrums of a cast of a bat's head (see image) when ultrasonic sounds are used to produce echoes from small objects.

Using exquisitely sensitive recording equipment located in one of ISVR's anechoic chambers, they recorded echoes up to 100 kHz with a variety of head-object geometries and distances. One intriguing question they considered was why bats drop the frequency of their echo-calls as they approach objects, such as prey. Using acoustical analysis and a computer simulation of the auditory processing taking place in the bat's cochlea, they showed that one reason bats might do this is to improve their perception of object distance, and to maintain a constant relationship between the actual and perceived left-right location of an object, at close range.

Humans, bats and dolphins have in common a specialized set of brain processes for using sounds to locate the sound source. One advantage that bats and dolphins have over humans is a highly evolved and developed system for producing vocalizations suitable for eliciting useful echoes. Daniel and BIAS-project acoustics researcher Dr Timos Papadopoulos set about separating the processes of producing and receiving echoes in order to find out the potential for humans to use echoes, for example if they are assisted in producing them.

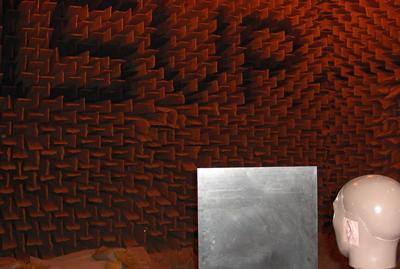

As part of his PhD, Dr David Edwards made a large set of recordings of the sounds produced from a reflective object in various positions relative to a dummy head in ISVR's large anechoic chamber (see image). MSc and BSc students Amanda Goodhew and Leah Evans, respectively, repeated this with a wider range of reflective objects, including large and small boards and a human.

These recordings were used to construct a 'virtual auditory space', whereby sounds are played back to people over headphones to give the same impression as if the person had been sitting in front of the object. This technique also removes unwanted cues that occur in 'real' physical arrangements.
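As a rough illustration of the idea behind a virtual auditory space, the sketch below convolves an emitted sound with a pair of impulse responses, one per ear, to produce the left and right headphone signals. This is only a minimal sketch of one common approach; the page does not state how the project's recordings were actually processed. The function names and the toy impulse responses (a single delayed, attenuated spike per ear) are hypothetical stand-ins for recordings made at a dummy head.

```python
def convolve(signal, impulse_response):
    """Direct-form discrete convolution of two sample lists."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, s in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[i + j] += s * h
    return out


def toy_impulse_response(delay_samples, gain, length):
    """Hypothetical stand-in for a recorded response: one delayed, scaled echo."""
    ir = [0.0] * length
    ir[delay_samples] = gain
    return ir


def render_binaural(emission, ir_left, ir_right):
    """Return the (left, right) headphone signals for one emitted sound."""
    return convolve(emission, ir_left), convolve(emission, ir_right)


# A short click as the emitted sound (illustrative values only).
click = [1.0, -0.5]

# Assumed toy case: the echo reaches the left ear earlier and louder,
# as it might for an object to the listener's left.
ir_left = toy_impulse_response(delay_samples=4, gain=0.30, length=8)
ir_right = toy_impulse_response(delay_samples=6, gain=0.20, length=8)

left, right = render_binaural(click, ir_left, ir_right)
print("left :", left)
print("right:", right)
```

In practice the impulse responses would come from measurements such as those described above, and the difference in arrival time and level between the two ears is what gives the listener the impression of an object at a particular position.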

Acoustical analysis, auditory modeling and numerous experiments carried out by these and other audiology students (including Hannah Holmes, Anna Hollingdale and Beki White) have demonstrated unambiguously that blind and sighted people can use echoes to locate objects, under certain conditions.

This ability has physical and perceptual limits. Physical limits include the reduction in the volume of the echo as the object is moved farther from the head; this effect also depends on the orientation of the object. A perceptual limit is related to processes in the brain that use information in the echo across different frequencies sub-optimally, such that it is sometimes better to avoid energy in the echo-producing sound below about 2 kHz. However, we also know that the perceptual processes involved in echolocation, and the optimal emissions, depend on the specific echolocation task.
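To give a feel for the physical limit described above, the following sketch estimates how the round-trip delay and the level of an echo change with distance. It is a simplified illustration, not the project's model: it assumes a speed of sound of 343 m/s and a large, flat, perfectly reflecting surface facing the listener (so the echo behaves like a source at twice the distance and loses about 6 dB per doubling of distance). Small or angled objects would return weaker echoes that fall off faster. The function names are hypothetical.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s in air at about 20 degrees C (assumed)


def echo_delay_ms(distance_m):
    """Round-trip travel time, in milliseconds, to an object and back."""
    return 2.0 * distance_m / SPEED_OF_SOUND * 1000.0


def echo_level_change_db(distance_m, reference_m=1.0):
    """Echo level relative to the same reflector at reference_m.

    Assumes a large flat reflector (image-source model): the echo path is
    twice the distance and pressure falls as 1/path, so the ratio of path
    lengths reduces to distance_m / reference_m.
    """
    return -20.0 * math.log10(distance_m / reference_m)


for d in (0.5, 1.0, 2.0, 4.0):
    print(f"{d:4.1f} m: delay {echo_delay_ms(d):5.2f} ms, "
          f"level {echo_level_change_db(d):+6.2f} dB re 1 m")
```

Under these assumptions, moving the reflector from 1 m to 2 m lowers the echo by about 6 dB and doubles its delay, which is one reason echoes from more distant objects are harder to use.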

Press releases

https://www.southampton.ac.uk/engineering/news/2013/05/20_echolocation.page

Radio

BBC World Service - http://www.bbc.co.uk/programmes/p01b0ryf

TV documentaries

US Discovery Channel documentary on echolocation

Call of the sea helps the blind - http://www.itv.com/news/meridian/update/2013-06-19/call-of-the-sea-helps-the-blind/

Articles

BBC News, 7th June 2013, See online article here

Daily Echo, 10th June 2013, See online article here

News Nation, 22nd May 2013, See online article here

Hindustan Times, 20th May 2013, See online article here

Daily Mail, 21st May 2013, see online article here

Nature World News, 20th May 2013, see here

Science Daily, 20th May 2013, see here

Optometry Today, 22nd May 2013, see here

Trinidad and Tobago Guardian, 11th June 2013, see here

Also reported on the front page of the Daily Telegraph, 21st May 2013

(see image below)

Audiology at Southampton - https://www.southampton.ac.uk/audiology/

Further information on blind echolocation - http://www.worldaccessfortheblind.org/

Further information on hearing and visual loss - http://www.sense.org.uk/

RNIB - http://www.rnib.org.uk/Pages/Home.aspx