Research Round-Up

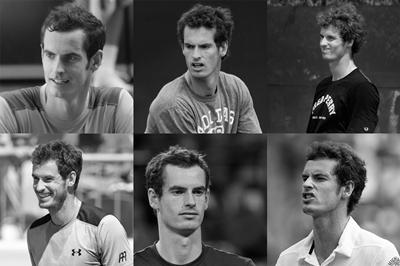

A brief summary of a few recent articles that caught our eye. This time, we are highlighting three articles on how humans and machines cope with within-person variability. Within-person variability refers to how an individual’s face or voice can vary from one instance to the next due to factors such as expression, health, and age. For instance, the photos to the left all depict Andy Murray but vary in age, expression, lighting, and pose. Understanding how humans and computer algorithms cope with within-person variability will be essential for improving the accuracy of biometric analysis.

Average faces improve facial recognition for humans and machines

One of the challenges for both humans and computer facial recognition algorithms is how to cope with variability in images of the same person. This challenge is even greater for low-quality images, such as those obtained from CCTV footage. One way to address it is to use a simple averaging procedure to combine multiple images of the same person. The rationale is that averaging multiple images of the same person should preserve the features that are diagnostic of identity (the signal) while removing sources of variability unrelated to identity (the noise). In three experiments, Ritchie and colleagues (2018) tested whether these face averages can improve human and computer facial recognition relative to single images, comparing the performance of humans (Experiment 1), a smartphone app (Experiment 2), and a commercially available face recognition system (Experiment 3). Across all experiments, face averages showed a benefit, particularly for low-quality pixelated images. These findings have important implications for the identification of individuals from low-quality CCTV footage.
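The signal-versus-noise rationale can be illustrated with a toy sketch. This is not the procedure used by Ritchie and colleagues: the "images" here are just flat lists of made-up pixel values, the noise is simulated, and names such as `average_images`, `identity`, and `photos` are hypothetical. Real face averaging would likely also need the photographs to be aligned first, a step that is skipped here.

```python
import random

def average_images(images):
    """Pixel-wise mean of equally sized images (each a flat list of floats)."""
    n = len(images)
    return [sum(pixels) / n for pixels in zip(*images)]

def mean_abs_error(image, reference):
    """Average absolute pixel difference between an image and a reference."""
    return sum(abs(a - b) for a, b in zip(image, reference)) / len(reference)

rng = random.Random(0)  # fixed seed so the sketch is repeatable

# Assumption: a stable "identity signal" shared by every photo of one person.
identity = [rng.random() for _ in range(1000)]

# Assumption: each photo is the signal plus independent noise standing in for
# identity-unrelated variability (lighting, expression, pose, and so on).
photos = [[p + rng.gauss(0, 0.2) for p in identity] for _ in range(10)]

average = average_images(photos)
single_error = mean_abs_error(photos[0], identity)
average_error = mean_abs_error(average, identity)

print(f"single photo error: {single_error:.3f}")
print(f"average error:      {average_error:.3f}")
```

In this simulation the average sits closer to the underlying signal than any single noisy photo does, because independent noise tends to cancel out while the shared signal is preserved.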

Face averaging improves detection of faces in a crowd

On the topic of averages, a very recent study investigated whether there is a benefit of face averages when searching for a face in a crowd. In three experiments, participants were presented with either a single facial photograph of a target individual, an average of 19 facial photographs of the target, or multiple photographs of the target, and were subsequently asked to pick that target out of an array of 20 different faces. Compared to a single image, accuracy was significantly higher when participants were exposed to either the face average or multiple images. Interestingly, for unfamiliar faces, accuracy was also higher when participants were presented with multiple images than when they were presented with the face average. Thus, exposure to within-person variability (multiple images of the target identity) can improve human ability to identify unfamiliar target faces in a crowd. These findings have implications for police operations in which one or more target individuals must be identified from a crowd. In these cases, viewing multiple images of the target may improve recognition.

Variability in speech style affects human and machine voice recognition

Our voices can also vary from one instance to the next depending on the context in which we are speaking. In a recent study, Park and colleagues (2018) investigated how humans and machines cope with within-person variability in speech style for short (under 2 seconds) phrases. Humans and machine algorithms were tested on a voice matching task that involved deciding whether pairs of voice clips belonged to the same person or to two different people. Adding to the challenge, the voice clips differed in speech style, with some pairs consisting of two scripted speech clips (matched) while others consisted of one scripted speech clip and one pet-directed speech¹ clip (mismatched). Results showed worse human performance for mismatched compared to matched speech styles. However, humans still outperformed machine algorithms for the majority of voices. This discrepancy between human and machine performance could occur for two reasons: (1) humans relied on acoustic cues not available to the machine algorithms tested, or (2) humans and machines rely on the same cues but use them in different ways. Further research into the strategies that humans use to cope with within-person variability may help to improve machine performance.

¹ Pet-directed speech describes the way people speak to pets, which typically involves higher pitch, slower tempo, and exaggerated prosody compared to normal speech. A similar type of speech pattern is used for talking to infants (infant-directed speech).