Research project: Fundamentals of spatial audio reproduction

Improving understanding of the physical and psychoacoustic principles of spatial audio reproduction for next generation systems.

What is spatial audio reproduction?

Spatial audio reproduction is the process of recreating the impression of a real or imagined sound environment including perceived spatial information such as the location of objects and the spatial qualities of room acoustics. Humans, and other animals, have evolved sophisticated spatial hearing capabilities in order to sense useful information about the environment.

Spatial audio can be reproduced over loudspeakers or headphones. Successful reproduction strategies combine knowledge of physical acoustics with the psychology of hearing, known as psychoacoustics. The earliest spatial audio reproduction appeared in the late 19th century, soon after electroacoustic devices were developed. Advances in theory and signal processing have led to a variety of reproduction systems and design principles, each appropriate for different scenarios. Progress today is more rapid than ever.

This project strand is investigating the fundamental principles of audio reproduction, and developing improved methods. The work is being supported by the EPSRC Programme Grant S3A: Future Spatial Audio for an Immersive Listener Experience at Home (EP/L000539/1), and the BBC as part of the BBC Audio Research Partnership. Current areas of enquiry are as follows:

Localization in general fields

Audio reproduction often involves the creation of sound-fields that significantly depart from the ideal target field. The psychoacoustic significance of this error varies depending on the reproduced field. We seek to better understand the physical and psychoacoustic aspects so that reproduction quality can be measured and reproduction can be improved.

Localization in panned fields, effect of head rotation

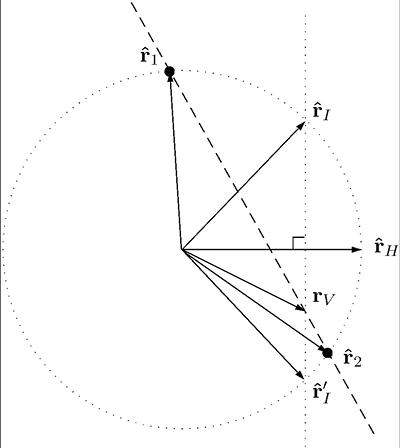

Amplitude panning is the process of sending the same signal, with different gains, to a few loudspeakers. The listener receives the signals in phase from different directions and perceives a single image. If the listener is facing the image, the image direction is determined by the so-called Makita vector, which is a function of the loudspeaker directions and gains. We extend this result to a listener facing away from the image. This has implications for improving panned image stability and coverage.
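As a rough illustration of how an image direction follows from loudspeaker directions and gains, the sketch below takes the direction of the gain-weighted sum of loudspeaker unit vectors. This is the commonly quoted low-frequency form of the Makita (velocity) vector for a listener facing the image; it is an assumption here, not necessarily the formulation used in the project, and it does not cover the head-rotated case. The function name `makita_direction` and the azimuth convention (0 degrees straight ahead, positive to the left) are hypothetical choices for this example.

```python
import math


def makita_direction(speaker_azimuths_deg, gains):
    """Azimuth (degrees) of the gain-weighted sum of loudspeaker unit vectors.

    Assumed convention: 0 degrees is straight ahead, positive angles are
    to the listener's left. Gains are assumed real and in phase.
    """
    # x points forward, y points to the left of the listener.
    x = sum(g * math.cos(math.radians(a))
            for a, g in zip(speaker_azimuths_deg, gains))
    y = sum(g * math.sin(math.radians(a))
            for a, g in zip(speaker_azimuths_deg, gains))
    return math.degrees(math.atan2(y, x))


# Standard stereo pair at +/-30 degrees, left loudspeaker twice as loud.
image_azimuth = makita_direction([30.0, -30.0], [2.0, 1.0])
print(f"Predicted image azimuth: {image_azimuth:.2f} degrees")
```

For a symmetric stereo pair this reduces to the familiar tangent panning law: the tangent of the image angle, divided by the tangent of the loudspeaker half-angle, equals the gain difference divided by the gain sum.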

Relating different panning systems

Reproduction systems frequently use ‘panning curves’ to describe how the signal gain for each loudspeaker varies with image direction. The collection of loudspeaker signals forms a multichannel reproduction signal. The aim of this work is to relate different panning sets and multichannel signals, and to answer various practical questions: How well can one description be transformed to another? What is the best way to encode a collection of signal formats?

Optimal sound-field reproduction

Wavefield Synthesis (WFS) is a popular method of sound-field reproduction using dense linear arrays of loudspeakers. For each source, WFS requires only one filter, together with one delay and one gain per loudspeaker. This is very efficient. However, the delay times and gains are typically calculated from successive approximations of analytical solutions, which leads to suboptimal results. This work improves reproduction by optimising these parameters based on physical and psychoacoustic measures.
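To make the "one delay and one gain per loudspeaker" structure concrete, here is a minimal sketch of the conventional analytical starting point for a virtual point source behind a linear array. It is not the optimised method developed in this work. It assumes the widely used simplified form in which the delay is the source-to-loudspeaker distance divided by the speed of sound, and the gain is proportional to the cosine of the angle from the array normal divided by the square root of that distance. The function name, the geometry (array along the x axis, source at negative y), the speed of sound, and the omission of overall scaling and the shared pre-filter are all assumptions made for illustration.

```python
import math

SPEED_OF_SOUND = 343.0  # metres per second, assumed room-temperature value


def wfs_delays_and_gains(source_xy, speaker_xs):
    """Return (delays_s, gains) for loudspeakers on the line y = 0.

    source_xy: (x, y) of the virtual point source, with y < 0 (behind the array).
    speaker_xs: x coordinates of the loudspeakers in metres.
    Gains are unnormalised; any common scale factor is left out.
    """
    sx, sy = source_xy
    if sy >= 0:
        raise ValueError("this sketch assumes the source lies behind the array (y < 0)")
    delays, gains = [], []
    for x in speaker_xs:
        r = math.hypot(x - sx, -sy)   # source-to-loudspeaker distance
        cos_phi = -sy / r             # cosine of angle from the array normal
        delays.append(r / SPEED_OF_SOUND)
        gains.append(cos_phi / math.sqrt(r))
    return delays, gains


delays, gains = wfs_delays_and_gains((0.0, -1.0), [-1.0, 0.0, 1.0])
for d, g in zip(delays, gains):
    print(f"delay = {d * 1000:.3f} ms, gain = {g:.3f}")
```

The loudspeaker nearest the source fires first and loudest, and the values fall off symmetrically to either side. These are the per-loudspeaker parameters that an optimisation could then adjust.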

Dylan Menzies, Filippo Fazi