Research Summary

Myself and some of my research team - October 2018.

My main research interests lie in the area of representation learning. The long-term goal of my research is to innovate techniques that can allow machines to learn from and understand the information conveyed by data and use that information to fulfil the information needs of humans. Broadly speaking this can be broken down into the following areas:

Novel representation

I have worked on a number of different approaches to creating novel representations from data. These include:

- New units for representing different data types: With Yan Zhang & Adam Prügel-Bennett, I’ve worked developing differentiable neural architectures for counting and working with unordered sets.

- Embedding and Disentanglement: I’ve worked on a number of aspects of learning joint embeddings of different modalities of data. Recently with Matthew Painter, Adam Prügel-Bennett and I have looked at how underlying latent processes might be disentangled.

- Learning architectures under constraints: In recent work with Sulaiman Sadiq, Geoff Merrett and I have started to look at how neural architectures for representation might be themselves learned to optimise against certain hardware constraints. Also related to this theme is joint work with Enrique Marquez and Mahesan Niranjan on Cascade Learning of deep networks, which allows a network to be grown from the bottom up.

- Representational translation: with Pavlos Vougiouklis I’ve worked a lot on the problem of translating structured data into a representational space that can then be translated into a human readable text; in short we trained neural networks to translate sets of <subject, predicate, object> triples into natural language descriptions.

- Acceleration features: with Yan Sun and Mark Nixon, I’ve developed novel representations for image sequences based on higher orders of motion such as acceleration and jerk. These representations can for example be used as an intermediary in recognition tasks.

- Soft Biometric representation: with Nawaf Almudhahka and Mark Nixon, I’ve developed new forms soft-biometric representation that allow photographs of people to be recognised from verbal descriptions.

Understanding representation and taking inspiration from biology

As we work towards the goal of building artificial intelligence, it is important that we understand how our models work internally, and perhaps even utilise knowledge of biological systems in their design. In this space, there are three main directions that stand out:

- Relating behaviours and internal representations of deep networks to biology: with Daniela Mihai and Ethan Harris, we’ve been working to understand what factors of a neural architecture cause the emergence of particular cell-level properties. In recent work we’ve explored how neural bottlenecks in artificial visual systems give rise to colour opponent cellular properties observed in real biological systems.

- Investigating the emergence of visual semantics: Daniela Mihai & I have been exploring what factors cause visual semantics to emerge from artificial agents parameterised by neural networks when they play a visual communication game.

- In ongoing work with colleagues as part of the EPSRC International Centre for Spatial Computational Learning, we’re considering if certain observations from biological neural networks, such as sparsity and overall architecture, could be used to help develop new ways of designing network models to better fit existing hardware, and better inform the development of future neural network hardware.

Applications of representation

- Learning representations of aerial imagery: With colleagues from Ordnance Survey and Lancaster University I’ve spent a lot of time investigating new ways of learning representations that have applications in the geospatial intelligence domain. With Iris Kramer, we’ve been investigating how deep learning and representation learning technologies can be applied to allow for the discovery of archaeological sites from aerial imagery and LiDaR data.

- Learning representations of scanned text documents. I was the Investigator of the Innovate UK funded Transcribe AI project, which looked at ways of learning representations of scanned textual documents that would allow for automated information extraction and reasoning over the information that was conveyed.

I have also been involved in numerous other projects involving both machine learning and computer vision. For example, I was part of a team that innovated a system for scanning archaeological artefacts in the form of ancient Near Eastern cylinder seals using structured light.

Through all my research, I have made a commitment to open science and reproducible results. The published outcomes of almost all my work is accompanied with open source implementations that others can view, modify and run. A large body of the outcomes of my earlier research can be found in the OpenIMAJ software project (see http://openimaj.org), which won the prestigious 2011 ACM Multimedia Open Source Software Competition. OpenIMAJ is now used by researchers and developers across the globe, and in a variety of national and international organisations.

Interests

- Representation Learning

- Differentiable Programming

- Deep Learning

- Machine Learning

- Computer Vision

- Data Understanding

- Content Mining

Current Ph.D. Students and Researchers

Harry Baker

Ph.D. Student

Feiyu Zhu

Ph.D. Student

Elliot Stein

Ph.D. Student

Shannon How

Ph.D. Student

Lei Xun

Ph.D. Student

Meet the team

I have a healthy team of researchers and Ph.D. students. Click the arrows to see them. See the contact page for information about opportunities to join the team.

Completed Ph.D. Students

Dr Ben Guthrie

Ph.D.

Meet my previous students

These are the students I have supervised to completion.

Research Projects

-

Spatial Computational Learning

The Center for Spatial Computational Learning is an international collaborative research center, bringing together experts from Imperial College, the University of Toronto, the University of California Los Angeles and the University of Southampton.

Find out more at spatialml.net.

-

TranscribeAI

-

Representation learning aims to derive features from the data without recourse to predefined feature transforms: the data set itself determines the features/representations to be extracted. The field of representation learning has advanced significantly in the last decade in tandem with understanding about how the brain processes sensory data. The value of these techniques lies in their potential to draw out underlying structure within the signal. These structures may then be used to infer meaning from the data, or as features for supervised learning.

ImageLearn will explore if we can use representation learning in the context of aerial imagery to extract useful features for inclusion in Ordnance Survey's data and mapping products.

-

SemanticNews was a mini-project funded by Semantic Media Network whose goal was to address the challenge of time-based navigation in large collections of media documents. The aim of the Semantic News project was to promote people's comprehension and assimilation of news and augmenting live broadcast news articles with information from the Semantic Web in the form of Linked Open Data (LOD).

-

ARCOMEM is about memory institutions like archives, museums, and libraries in the age of the Social Web. Memory institutions are more important now than ever: as we face greater economic and environmental challenges we need our understanding of the past to help us navigate to a sustainable future. This is a core function of democracies, but this function faces stiff new challenges in face of the Social Web, and of the radical changes in information creation, communication and citizen involvement that currently characterise our information society (e.g., there are now more social network hits than Google searches). Social media are becoming more and more pervasive in all areas of life. In the UK, for example, it is now not unknown for a government minister to answer a parliamentary question using Twitter, and this material is both ephemeral and highly contextualised, making it increasingly difficult for a political archivist to decide what to preserve. This new world challenges the relevance and power of our memory institutions. To answer these challenges, ARCOMEM's aim is to: - help transform archives into collective memories that are more tightly integrated with their community of users - exploit Social Web and the wisdom of crowds to make Web archiving a more selective and meaning-based process To do this we will provide innovative tools for archivists to help exploit the new media and make our organisational memories richer and more relevant.

We will do this in three ways:

- first we will show how social media can help archivists select material for inclusion, providing content appraisal via the social web

- second we will show how social media mining can enrich archives, moving towards structured preservation around semantic categories

- third we will look at social, community and user-based archive creation methods As results of this activity the outcomes of the ARCOMEM project will include:

- innovative models and tools for Social Web driven content appraisal and selection, and intelligent content acquisition

- novel methods for Social Web analysis, Web crawling and mining, event and topic detection and consolidation, and multimedia content mining - reusable components for archive enrichment and contextualization

- two complementary example applications, the first for media-related Web archives and the second for political archives - a standards-oriented ARCOMEM demonstration system The impact of these outcomes will be to a) reduce the risk of losing irreplaceable ephemeral web information, b) facilitate cost-efficient and effective archive creation, and c) support the creation of more valuable archives. In this way we hope to strengthen our democracies' understanding of the past, in order to better direct our present towards viable and sustainable modes of living, and thus to make a contribution to the future of Europe and beyond.

-

Knowledge and its articulations are strongly influenced by diversity in, e.g., cultural backgrounds, schools of thought, geographical contexts. Judgements, assessments and opinions, which play a crucial role in many areas of democratic societies, including politics and economics, reflect this diversity in perspective and goals. For the information on the Web (including, e.g., news and blogs) diversity - implied by the ever increasing multitude of information providers - is the reason for diverging viewpoints and conflicts. Time and evolution add a further dimension making diversity an intrinsic and unavoidable property of knowledge.

The vision inspiring LivingKnowledge is to consider diversity an asset and to make it traceable, understandable and exploitable, with the goal to improve navigation and search in very large multimodal datasets (e.g., the Web itself). LivingKnowledge will study the effect of diversity and time on opinions and bias, a topic with high potential for social and economic exploitation. We envisage a future where search and navigation tools (e.g., search engines) will automatically classify and organize opinions and bias (about, e.g., global warming or the Olympic games in China) and, therefore, will produce more insightful, better organized, easier-to-understand output.

LivingKnowledge employs interdisciplinary competences from, e.g., philosophy of science, cognitive science, library science and semiotics. The proposed solution is based on the foundational notions of context and its ability to localize meaning, and the notion of facet, as from library science, and its ability to organize knowledge as a set of interoperable components (i.e., facets). The project will construct a very large testbed, integrating many years of Web history and value-added knowledge, state-of-the-art search technology and the results of the project. The testbed will be made available for experimentation, dissemination, and exploitation.

The overall goal of the LivingKnowledge project is to bring a new quality into search and knowledge management technology, which makes search results more concise, complete and contextualised. On a provisional basis, we take as referring to the process of compacting knowledge into digestible elements, completeness as meaning the provision of comprehensive knowledge that reflects the inherent diversity of the data, and contextualisation as indicating everything that allows us to understand and interpret this diversity.

-

The aim of LiveMemories was to scale up content extraction techniques towards very large scale extraction from multimedia sources, setting the scene for a Content Management Platform for Trentino; using this information to support new ways of linking, summarizing and classifying data in a new generation of digital memories which are `alive’ and user-centered; and to turn the creation of such memories into a communal web activity. Achieving these objectives will make Trento a key player in the new Web Science Initiative, digital memories, and Web 2.0, thanks also to the involvement of Southampton. But LiveMemories is also intended to have a social and cultural impact besides the scientific one: through the collection, analysis and preservation of digital memories of Trentino; by facilitating and encouraging the preservation of such community memories; and the fostering of new forms of community, and enrichment of our cultural and social heritage.

In the digital age, our records of past and present are growing at an unprecedented pace. Huge efforts are under way in order to digitize data now on analogical support; at the same time, low-cost devices to create records in the form of e.g. images, videos, and text are now widespread, such as digital cameras or mobile phones.

This wealth of data, when combined with new technologies for sharing data through platforms such as Flickr, Facebook, or the blogs, open up completely new, huge opportunities of access to memory and of communal participation to its experience.

-

LifeGuide is an interdisciplinary project between the ECS and the Schools of Psychology at UCL and Southampton. The aim is to investigate whether it is possible to develop a software system that allows health professionals to easily author interactive websites (known as "behavioural interventions") that can help influence people's behaviour. For example, one possible intervention may aim to help it's users give up smoking, or reduce alcohol consumption. The project has many challenges; not least of which are all the HCI and usability considerations in designing software that allows novices to perform what can be a rather complex task. During my time on the project, I designed the overall architecture, wrote a large portion of the back-end software (based on JQTI, developed in the AsDel project described below), and managed the day-to-day finances and running of the project team in ECS.

-

The AsDel project was funded by JISC to develop an assessment delivery engine based on the IMS QTI V2.1 specification. My role on the project was to design the overall architecture and manage the programmer employed on the project (whilst doing some of the coding myself and doing independent image retrieval research!). One particular element of the project was the development of a software library for processing QTI XML documents, called JQTI. JQTI seems to have become rather popular in the QTI community and has seen significant uptake in other projects. As part of a piece of recent consultancy work, I was employed to update the JQTI library to cover the entirety of the QTI specification (rather than just the assessment parts). I also developed a new web-based system, QTIEngine for running QTI questions and assessments that removed reference to much of the legacy code used in the AsDel project for handling presentation of questions. This engine also contained a plugin for handling questions with advanced mathematical content.

-

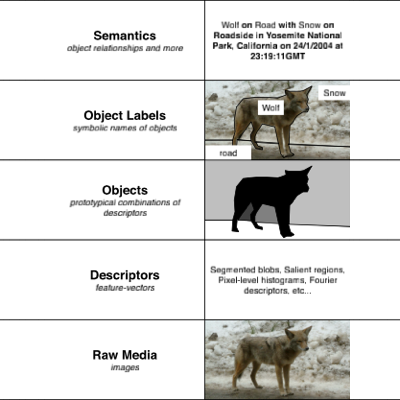

The semantic gap project aimed to explore the problem of the semantic gap in image retrieval. In essence, the project had two parts; in the first part our collaborators surveyed and collected data about queries from a number of professional image archives. This data was then analysed in order to give us some insight into what professional image searchers actually want. The second phase of the project was more technological and involved the development of techniques for actually helping the searchers. In particular, we looked at ways of making large unannotated image collections accessible to retrieval through augmented browsing and semantic search (based on improvements in the semantic space developed in my PhD). We also developed techniques based on semantic-web technologies that used inferencing for automatic query expansion.

-

I began by investigating the use of interest points and salient regions for robust content-based retrieval and matching. The latter parts of my research increasing focused on the problem of the semantic gap in image retrieval, and I began to investigate and develop novel methodologies for providing semantic search of unannotated imagery.

In particular, I developed a linear-algebraic semantic space representation in which words and images were projected into a large vector space such that images were placed near to the words that semantically described their content. The best part of this representation is that it allows unannotated images to be projected in, and by analysing their placement it is possible to determine the words that are most likely associated with the respective images.