It’s my second month as a content designer for the OneWeb team and I’ve been learning about user research with the wonderful Maya Wiseman. Maya is a user experience researcher who has worked with Llibertat on OneWeb here at Southampton. She has also worked with other higher education institutions and the government. Here are some of my key takeaways from our time with her.

User research is important

According to usability.gov, user research focuses on:

Understanding behaviours, needs, and motivations through observation techniques, task analysis, and other feedback methodologies.

Content designers put user needs front and centre. We’re 30 years on from the creation of the web and user experience is now a mature field, so we can’t underestimate the sophistication of today’s web users. They have:

- little time

- many distractions

- high expectations

An often-quoted metric from the Nielsen Norman Group shows how little written content users actually read on the average web page:

On the average web page, users have time to read at most 28% of the words during an average visit; 20% is more likely.
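To make that figure concrete, here is a rough sketch of the arithmetic. The 600-word page length is an assumed example rather than a number from the Nielsen Norman Group, and the function name is hypothetical.

```python
def words_likely_read(word_count: int, share_read: float) -> int:
    """Estimate how many words a visitor reads, given the share of the page they get through."""
    return round(word_count * share_read)


page_words = 600  # assumed page length, for illustration only

at_most = words_likely_read(page_words, 0.28)      # the "at most 28%" case
more_likely = words_likely_read(page_words, 0.20)  # the "20% is more likely" case

print(f"At most {at_most} words read, more likely about {more_likely}")
```

On a page of that length, most of what we write goes unread, which is why the words that are read need to earn their place.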

This makes relevance and usefulness a high priority for any organisation. Since finding information is the main activity of a visitor to our website, we need to help them do or find what they need. User research gives us the information to design content that meets their requirements.

Useful content is good for business

If our content isn’t meeting user needs we’re essentially operating in broadcast mode, holding our breath and hoping for the best. That’s bad for the user and bad for business.

So making content useful is mutually beneficial. You’re respecting users by giving them what they need and you’re valuing their time. Plus, you’re meeting the objectives of the business.

The organisation benefits by:

- saving time

- improving productivity

- avoiding rework costs

- enhancing reputation

- generating trust

Really, it is a no-brainer!

We are not our users

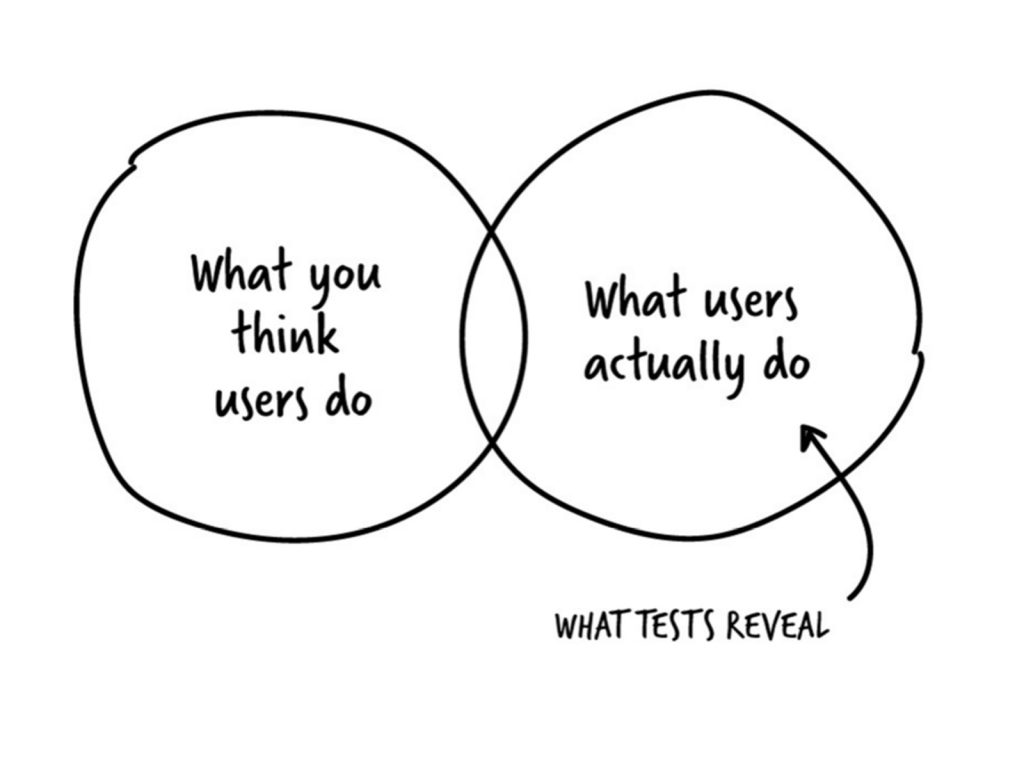

Nor are we mind-readers. If we don’t do research we’re making guesses about who is using our site and what their needs are. Thinking hard about what they might need, while commendable, is meaningless. It’s evidence we want. We wouldn’t make assumptions about a piece of academic research before the findings are known, so why do it with our users?

Source: 10 steps to start doing user research

And we mustn’t forget that our users are human beings – complex, unique and surprising. As Maya says: ‘We don’t know what they don’t know.’ Visually impaired users, for example, have specific requirements which include catering for screen-reading software.

Informing our actions using data is the University’s lifeblood so it makes perfect sense to align this approach with our content development.

Don’t ask users what they think

Short and sweet, but what a user thinks and what a user does are often radically different. Relying on opinions alone is a mistake some people make, and they’re left scratching their heads when acting on their findings changes nothing. This is why observation is an essential technique in the user research toolkit.

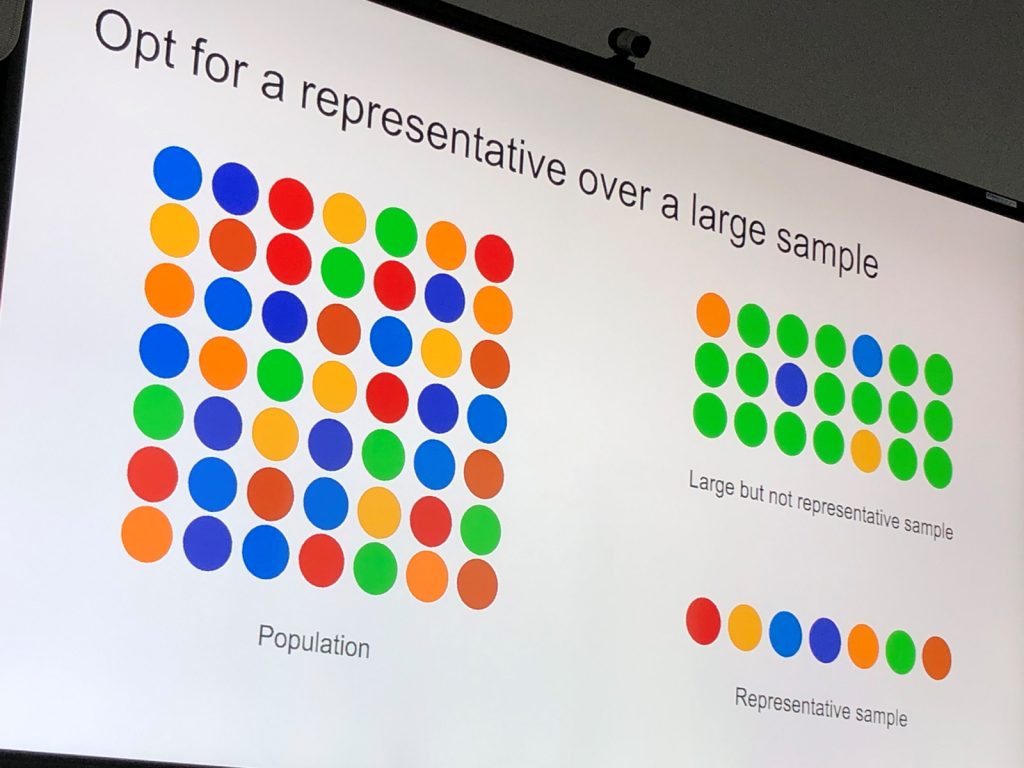

More participants isn’t a guarantee of better results

When you’re planning your recruitment brief, recruiting more participants won’t necessarily give you a better-quality research outcome. Making sure you have a representative sample is more valuable.
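One way to think about a representative sample is to share out your research sessions in proportion to the size of each user group. The sketch below is only an illustration of that idea: the group names and audience sizes are invented, the function name is hypothetical, and proportional allocation is one possible approach rather than the method Maya described.

```python
def allocate_participants(population: dict[str, int], slots: int) -> dict[str, int]:
    """Share out research slots in proportion to each group's size (largest-remainder method)."""
    total = sum(population.values())
    # Exact (fractional) share of slots each group would get.
    exact = {group: slots * size / total for group, size in population.items()}
    # Start by giving every group the whole-number part of its share.
    allocation = {group: int(share) for group, share in exact.items()}
    leftover = slots - sum(allocation.values())
    # Hand any remaining slots to the groups with the largest fractional parts.
    by_remainder = sorted(exact, key=lambda g: exact[g] - allocation[g], reverse=True)
    for group in by_remainder[:leftover]:
        allocation[group] += 1
    return allocation


# Hypothetical audience sizes, invented for illustration.
audience = {
    "undergraduate applicants": 6000,
    "postgraduate applicants": 3000,
    "mature students": 1000,
}

plan = allocate_participants(audience, slots=8)
print(plan)
```

A purely proportional split can leave a small but important group with no sessions at all, so in practice you may want to guarantee each group at least one participant before sharing out the rest.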

Choosing the right timings, location and duration for your research sessions is also crucial – what works well for current engineering postgraduates may not work well for graduate-entry nursing enquirers. Mature students who might be working parents, for example, will have a host of contextual distractions. It’s also important to factor these distractions into the content we create for them.

Representative samples are important to ensure the right people are part of your group

Source: courtesy of Maya Wiseman

Defining research goals and questions is a must-do

Goals are there to identify, understand and gauge a problem by answering a series of questions such as:

- how are people using this page?

- what do they want from it?

- why aren’t they completing their task?

Good questions are about users rather than services, and they must have an implication for the work. If they don’t, rework or remove them entirely.

It’s fine to mix things up

Apparently using mixed methods is on trend! But it’s true that most projects would benefit from using several approaches. It’s also wise to carefully consider the most appropriate method for your scenario rather than plumping for the techniques you are most familiar with.

For example, using both quantitative and qualitative techniques provides very different but equally valuable and often complementary findings.

Some methods are outlined in this table. Each has its place in helping surface the detail needed to inform content development.

| Method | What it helps you do |
| --- | --- |
| Contextual research | Observe users in their usual environment to identify evidence of need and behaviour |
| Interviews | Ask users to describe their situation, beliefs, experiences |
| Usability testing | Nudge users to do tasks, or observe unprompted interactions |
| Participative design | Work with users to design creative solutions or ideas |
| Card sorting and tree testing | See how users categorise or navigate information |
| Survey | Uncover user problems, behaviours, needs etc. |
| Eye tracking | Identify users’ reading patterns |
| AB or variation testing | Find out which version of a design is more effective |
| Pop-up research | Gather insights from users on the spot |

Staying useful is a continual evolution

One constant we can be sure of is change, and in my new role it’s all about embracing it. After all, the habits and behaviours of our web visitors, whether they’re prospective students, members of our local community or potential research partners, do not remain the same.

By putting users at the heart of our process we can ensure our content continually evolves and stays useful.

Proving value

As content designers we recognise that user research is a team sport and can be hugely beneficial to the University. The more we learn about our users and the more we share our knowledge, the more value we can deliver.

As a team, we test and iterate content with users regularly. We want to ensure that they can find it, understand it and act on it. If they struggle, we tweak and refine until it perfectly meets their needs – or, at least, a bit more perfectly than it did before.

Moving forward, we want to shout more loudly about our successes. We want to be tracking metrics that matter and prove the value of user-centred content to the University. We want to share what we’ve learnt about our users and ensure that those findings inform every part of the University’s communications.

If we don’t meet user needs, we can’t expect to meet business needs.

A big thank you to Maya and everyone who contributed to this post. Some further user research reading (courtesy of Maya):